As artificial intelligence systems continue to evolve, organizations increasingly depend on high-quality annotated datasets to train machine learning and natural language processing models. From healthcare chatbots and financial analytics platforms to legal document automation and customer support systems, text annotation has become a foundational process in AI development. However, as enterprises gather and label massive volumes of textual data, concerns around privacy, confidentiality, and regulatory compliance have intensified.

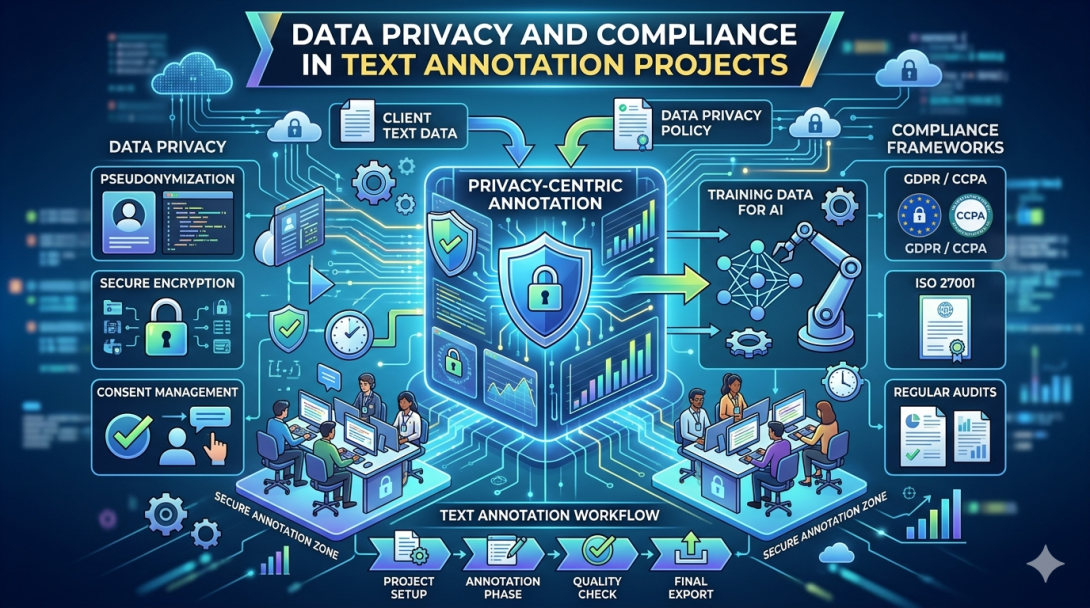

For every modern AI initiative, protecting sensitive information is no longer optional. Businesses must ensure that annotation workflows comply with strict data protection standards while maintaining annotation accuracy and scalability. This is where a reliable data annotation company plays a critical role in building secure, compliant, and trustworthy annotation ecosystems.

Why Data Privacy Matters in Text Annotation

Text annotation projects often involve sensitive or personally identifiable information (PII). Annotators may encounter names, addresses, medical records, financial transactions, emails, legal contracts, customer conversations, or confidential business information during the labeling process. If this data is mishandled, organizations may face legal penalties, reputational damage, and security breaches.

Consequently, enterprises implementing AI solutions must ensure that annotation environments include strong privacy safeguards. Whether companies manage projects internally or through data annotation outsourcing, maintaining confidentiality throughout the annotation lifecycle is essential.

Moreover, users are becoming increasingly aware of how their data is collected and processed. Therefore, AI companies must demonstrate responsible data governance practices to build customer trust and meet regulatory expectations.

Common Privacy Risks in Annotation Projects

Although text annotation accelerates AI model training, it also introduces several security and compliance risks. Understanding these challenges helps organizations implement better protection mechanisms.

Exposure of Personally Identifiable Information

Many datasets contain personal details such as names, phone numbers, account numbers, or identification records. Without proper anonymization, annotators may unintentionally access sensitive information.

Unauthorized Data Access

If annotation systems lack role-based access controls, unauthorized individuals may gain access to confidential datasets. This risk increases significantly in large-scale text annotation outsourcing environments involving distributed teams.

Data Leakage During Transmission

Data transferred between clients, annotation platforms, and remote teams can become vulnerable if encryption protocols are weak or outdated.

Third-Party Compliance Failures

Organizations relying on external annotation vendors must ensure that third-party partners comply with industry regulations. A non-compliant vendor can expose the entire project to regulatory violations.

Inadequate Data Retention Policies

Storing annotation data longer than necessary may increase exposure risks. Secure deletion and lifecycle management policies are essential for minimizing vulnerabilities.

Key Compliance Regulations Affecting Text Annotation

Today, AI organizations operate in a global regulatory landscape where data protection laws continue to evolve. Therefore, every text annotation company must align its operations with major compliance frameworks.

General Data Protection Regulation (GDPR)

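The GDPR governs how organizations process the personal data of individuals in the European Union, and it can apply to organizations based outside the EU that offer goods or services to those individuals. It emphasizes transparency, a lawful basis for processing such as user consent, data minimization, and the right to erasure. Annotation providers working with EU data must implement strict safeguards to comply with GDPR requirements.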

Health Insurance Portability and Accountability Act (HIPAA)

Healthcare AI projects frequently involve patient records and medical conversations. In the United States, HIPAA sets requirements for safeguarding protected health information (PHI), so annotation workflows that handle PHI must keep it secure at every stage.

California Consumer Privacy Act (CCPA)

The CCPA gives California residents rights over how their personal information is collected, used, and shared. Companies conducting annotation projects that involve California residents' data must maintain transparency and privacy controls.

ISO 27001 Standards

ISO/IEC 27001 certification indicates that an organization's information security management system has been audited against an internationally recognized standard. Many enterprises prefer partnering with a text annotation company that adheres to this standard.

PCI DSS Compliance

Financial annotation projects that involve payment card data must comply with PCI DSS requirements, which are designed to protect cardholder information.

Best Practices for Secure Text Annotation Workflows

To reduce privacy risks and maintain compliance, organizations should adopt comprehensive security practices throughout the annotation pipeline.

Data Anonymization and Masking

Before datasets reach annotators, sensitive information should be anonymized or masked whenever possible. Techniques such as tokenization, pseudonymization, and redaction help protect user identities without compromising annotation quality.

For example, customer names may be replaced with placeholders while preserving sentence context for NLP training.
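The placeholder idea above can be sketched in a few lines of Python. This is a minimal illustration, not a production anonymizer: the regular expressions, the `known_names` list, and the helper names `pseudonym` and `mask_text` are assumptions made for this example. Real projects usually need locale-aware patterns and a named-entity recognition model to find names reliably.

```python
import hashlib
import hmac
import re

# Illustrative patterns only (assumption): they will miss many real-world formats.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def pseudonym(value: str, key: bytes, prefix: str) -> str:
    """Map a value to a stable placeholder using a keyed hash (HMAC-SHA256)."""
    digest = hmac.new(key, value.lower().encode("utf-8"), hashlib.sha256).hexdigest()
    return f"[{prefix}_{digest[:8]}]"


def mask_text(text: str, known_names: list[str], key: bytes) -> str:
    """Replace emails, phone numbers, and listed names with placeholders."""
    # Emails first, so digits inside an address are not mistaken for a phone number.
    text = EMAIL_RE.sub(lambda m: pseudonym(m.group(), key, "EMAIL"), text)
    # Phone numbers are fully redacted rather than pseudonymized.
    text = PHONE_RE.sub("[PHONE]", text)
    # Names come from a caller-supplied list (hypothetical stand-in for NER output).
    for name in known_names:
        placeholder = pseudonym(name, key, "NAME")
        pattern = re.compile(rf"\b{re.escape(name)}\b", re.IGNORECASE)
        text = pattern.sub(lambda m, p=placeholder: p, text)
    return text


if __name__ == "__main__":
    sample = "Maria Lopez wrote from maria@example.com, call +1 (555) 010-9999."
    print(mask_text(sample, ["Maria Lopez"], key=b"replace-with-a-secret-key"))
```

Because the same name always maps to the same placeholder under a given key, annotators can still tell that two sentences refer to the same customer without seeing who that customer is. The key itself would need to be stored away from the annotation environment.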

Role-Based Access Controls

Not every team member requires access to all project data. Implementing role-based permissions limits exposure and ensures that users only access information necessary for their tasks.

Additionally, activity logs and monitoring systems help organizations track user actions and identify suspicious behavior.
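As a rough sketch of how role-based permissions and activity logging fit together, the Python below checks each requested action against a role's permission set and records every decision. The role names, permission names, and the `ActivityLog` and `authorize` helpers are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role names and permissions, chosen only for illustration.
ROLE_PERMISSIONS = {
    "annotator": {"read_masked", "submit_labels"},
    "reviewer": {"read_masked", "submit_labels", "approve_labels"},
    "admin": {"read_masked", "read_raw", "approve_labels", "export_dataset"},
}


@dataclass
class ActivityLog:
    """In-memory log of access decisions; a real system would persist these."""
    entries: list = field(default_factory=list)

    def record(self, user: str, role: str, action: str, allowed: bool) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "allowed": allowed,
        })

    def denied(self) -> list:
        """Return the denied attempts, a simple starting point for review."""
        return [e for e in self.entries if not e["allowed"]]


def authorize(user: str, role: str, action: str, log: ActivityLog) -> bool:
    """Allow the action only if the role grants it; unknown roles get nothing."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    log.record(user, role, action, allowed)
    return allowed


if __name__ == "__main__":
    log = ActivityLog()
    print(authorize("u1", "annotator", "read_masked", log))
    print(authorize("u1", "annotator", "read_raw", log))
    print(len(log.denied()))
```

Denying by default for unknown roles keeps a misconfigured account from seeing data it was never granted.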

Secure Annotation Platforms

Annotation platforms should support encrypted data transmission, multi-factor authentication, secure APIs, and controlled access environments. Cloud-based infrastructure must also comply with recognized security certifications.

A professional data annotation company typically invests heavily in secure infrastructure to protect enterprise datasets.
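To make the encrypted-transmission requirement concrete, here is a small sketch using Python's standard `ssl` module. It builds a client-side TLS context that verifies server certificates and refuses protocol versions older than TLS 1.2. The function name `make_client_context` and the choice of TLS 1.2 as the floor are assumptions for this example; an organization's own policy may require a higher minimum.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a TLS client context that rejects outdated protocol versions."""
    # create_default_context() enables certificate and hostname verification.
    ctx = ssl.create_default_context()
    # Assumed policy for this sketch: nothing older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


if __name__ == "__main__":
    ctx = make_client_context()
    print(ctx.minimum_version, ctx.verify_mode, ctx.check_hostname)
```

A context like this can be passed to standard-library clients such as `urllib.request.urlopen(url, context=ctx)` when uploading or downloading annotation data.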

Confidentiality Agreements and Workforce Training

Human annotators remain a critical component of AI training workflows. Therefore, organizations must ensure that annotators understand privacy responsibilities and compliance obligations.

Regular security awareness training, signed NDAs, and internal compliance policies help minimize human-related risks.

Secure Data Storage and Deletion

Organizations should establish clear retention and deletion policies to prevent unnecessary storage of sensitive information. Once annotation tasks are complete, datasets should be archived securely or permanently deleted according to compliance guidelines.
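A retention policy of this kind can be expressed as a simple date check. The sketch below assumes each dataset record carries a hypothetical `completed_on` date and that the policy is a fixed number of days after completion; both are illustrative choices, and actual retention periods depend on contracts and applicable regulations.

```python
from datetime import date, timedelta


def is_expired(completed_on: date, retention_days: int, today: date) -> bool:
    """True once the retention window after completion has fully passed."""
    return today > completed_on + timedelta(days=retention_days)


def due_for_deletion(datasets: list[dict], retention_days: int, today: date) -> list[str]:
    """Return IDs of completed datasets whose retention window has passed."""
    return [
        d["id"]
        for d in datasets
        # Datasets still in progress have no completion date and are skipped.
        if d["completed_on"] is not None
        and is_expired(d["completed_on"], retention_days, today)
    ]


if __name__ == "__main__":
    records = [
        {"id": "support-chats", "completed_on": date(2024, 1, 1)},
        {"id": "claims-notes", "completed_on": date(2024, 6, 1)},
        {"id": "in-progress", "completed_on": None},
    ]
    print(due_for_deletion(records, retention_days=90, today=date(2024, 6, 30)))
```

Running a check like this on a schedule turns the written policy into a list of datasets that someone must archive or delete.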

Audit Trails and Documentation

Maintaining detailed records of data access, annotation activity, and security controls supports regulatory audits and demonstrates accountability. Documentation also helps enterprises verify vendor compliance during partnerships.
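One way to make such records harder to alter unnoticed is to chain each entry to the previous one with a hash. The article does not prescribe this technique; the sketch below is one possible approach, and the entry fields and the `append_entry` and `verify_chain` helpers are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(event: dict, prev_hash: str) -> str:
    """Hash the event together with the previous entry's hash."""
    payload = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(chain: list, event: dict) -> dict:
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    entry = {"event": event, "prev_hash": prev_hash, "hash": _entry_hash(event, prev_hash)}
    chain.append(entry)
    return entry


def verify_chain(chain: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if _entry_hash(entry["event"], prev_hash) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    trail = []
    append_entry(trail, {"user": "u1", "action": "open_task", "task": "t-17"})
    append_entry(trail, {"user": "u1", "action": "submit_labels", "task": "t-17"})
    print(verify_chain(trail))
```

A chain like this detects changes to past entries but does not prevent them, so the log would still need restricted write access and off-system backups.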

The Role of Data Annotation Outsourcing in Compliance

Many enterprises choose data annotation outsourcing to scale AI projects efficiently and reduce operational costs. However, outsourcing introduces additional compliance considerations that organizations must evaluate carefully.

Selecting the right annotation partner becomes essential because the vendor directly handles sensitive business data. Consequently, businesses should assess several factors before outsourcing annotation operations.

Security Infrastructure

Organizations should evaluate whether the vendor uses secure annotation environments, encrypted communication channels, and restricted access systems.

Compliance Certifications

A trustworthy text annotation company should maintain relevant certifications and demonstrate adherence to global compliance standards.

Transparent Processes

Clear documentation regarding data handling, storage, retention, and deletion policies helps organizations assess operational transparency.

Workforce Management

Enterprises should verify whether annotators receive compliance training and whether the vendor enforces confidentiality agreements across all teams.

Geographic Considerations

Data sovereignty regulations may restrict where data can be processed or stored. Therefore, organizations should ensure that outsourced annotation operations align with regional legal requirements.
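A residency rule like this can be enforced in code before a task is ever routed to a team. The sketch below uses a hypothetical policy table mapping where data originated to the regions allowed to process it. The region codes and the table contents are invented for illustration and are not statements of what any law permits; the real table should come from legal review.

```python
# Hypothetical policy table (assumption): origin region -> permitted processing regions.
ALLOWED_PROCESSING_REGIONS = {
    "eu": {"eu"},
    "us": {"us", "eu"},
}


def can_process(data_origin: str, processing_region: str) -> bool:
    """Allow processing only where the policy table explicitly permits it."""
    # Unknown origins fall back to an empty set, so they are denied by default.
    return processing_region in ALLOWED_PROCESSING_REGIONS.get(data_origin, set())


def route_task(data_origin: str, candidate_regions: list[str]) -> list[str]:
    """Filter candidate annotation sites down to those the policy allows."""
    return [r for r in candidate_regions if can_process(data_origin, r)]


if __name__ == "__main__":
    print(route_task("eu", ["us", "eu", "in"]))
    print(route_task("unknown", ["us", "eu"]))
```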

When implemented correctly, text annotation outsourcing enables businesses to scale securely while maintaining strong data governance practices.

Industry-Specific Privacy Challenges

Different industries face unique privacy and compliance challenges during annotation projects.

Healthcare

Medical AI systems require annotation of clinical notes, prescriptions, patient histories, and diagnostic records. HIPAA compliance and patient confidentiality are critical priorities.

Finance

Financial institutions process highly sensitive transaction data, customer communications, and fraud detection records. Secure annotation practices help prevent financial data exposure.

Legal Services

Legal AI applications involve contracts, litigation documents, and confidential case information. Annotation providers must maintain strict confidentiality and access controls.

E-commerce and Customer Support

Customer conversations, feedback, and purchase histories often contain personal information. Compliance with consumer privacy laws becomes essential for these projects.

How Annotera Supports Secure Annotation Projects

At Annotera, data privacy and compliance are central to every annotation workflow. As a trusted data annotation company, Annotera combines advanced security practices with scalable annotation expertise to help enterprises build reliable AI systems safely.

Our teams follow strict confidentiality protocols, secure access management procedures, and compliance-driven workflows designed to protect sensitive information across all project stages. Additionally, our annotation environments support secure collaboration, encrypted data handling, and quality assurance monitoring.

Through responsible data annotation outsourcing, Annotera enables organizations to scale AI development while maintaining compliance with industry regulations and enterprise security requirements.

Conclusion

As AI adoption accelerates globally, organizations must prioritize data privacy and regulatory compliance throughout their annotation operations. Text annotation projects frequently involve sensitive information, making security and governance essential for sustainable AI development.

By implementing anonymization techniques, secure infrastructure, access controls, workforce training, and compliance monitoring, enterprises can significantly reduce operational risks. Furthermore, partnering with an experienced text annotation company helps ensure that annotation workflows remain secure, scalable, and regulation-ready.

In an increasingly regulated digital environment, businesses that invest in compliant annotation practices will build stronger AI systems, earn customer trust, and create long-term competitive advantages. Through reliable data annotation outsourcing, organizations can confidently advance AI innovation while safeguarding valuable data assets.